Why I Didn't Launch AI Channels

High costs and slow response times with GPT-3 made AI Channels impractical as a consumer product, so I prioritized learning and joined OpenAI instead of launching.

Prior to joining OpenAI in the summer of 2020, I spent a lot of time thinking about releasing my own AI application on top of GPT-3.

I’d been given early access to the model, and I basically went down every rabbit hole I could find:

- How do you manage long contexts when you only have ~2,000 tokens?

- How do you make a chatbot remember key facts?

- How do you do “tool use” (even if we weren’t calling it that yet)?

- How do you route different user intents to different behaviors?

I was obsessed with techniques—prompt switching, “gating” (using a cheaper/smaller step to detect intent and then handing off to a bigger step), and having the model decide whether a user request should trigger something like searching the web, storing a memory, or looking something up.
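To make "gating" concrete, here is a minimal sketch of the pattern, not the actual AI Channels code. The intent labels, keyword cues, and handler functions are all hypothetical, and the keyword match only stands in for the cheaper/smaller step, which in practice would more likely be a small model call.

```python
from typing import Callable, Dict, Tuple

# Hypothetical intents and cues (assumptions for illustration only).
INTENT_CUES: Dict[str, Tuple[str, ...]] = {
    "search": ("search", "look up", "find"),
    "remember": ("remember", "note that"),
}


def cheap_gate(message: str) -> str:
    """Stand-in for the cheap step: map a message to an intent label."""
    text = message.lower()
    for intent, cues in INTENT_CUES.items():
        if any(cue in text for cue in cues):
            return intent
    return "chat"  # default when no cue matches


# Hypothetical handlers; each would wrap a bigger, more expensive step.
def handle_search(message: str) -> str:
    return f"[search] would query the web for: {message}"


def handle_remember(message: str) -> str:
    return f"[remember] would store a memory: {message}"


def handle_chat(message: str) -> str:
    return f"[chat] would send to the large model: {message}"


HANDLERS: Dict[str, Callable[[str], str]] = {
    "search": handle_search,
    "remember": handle_remember,
    "chat": handle_chat,
}


def route(message: str) -> str:
    """Run the cheap gate first, then hand off to the matching handler."""
    return HANDLERS[cheap_gate(message)](message)
```

The point of the split is that the expensive step only runs once the cheap step has decided which behavior the request needs.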

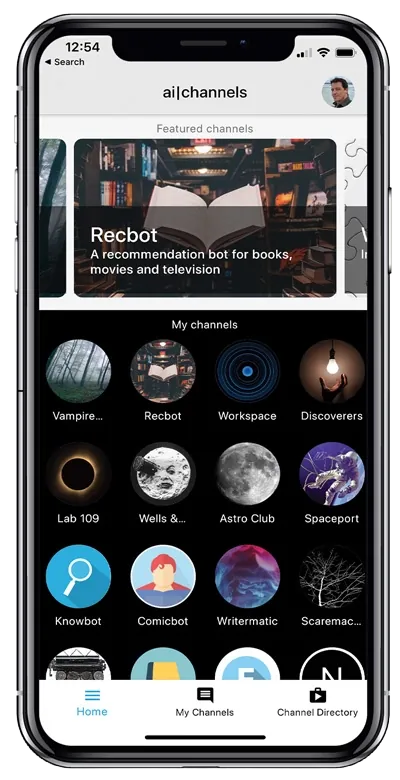

Out of that came an app I built called AI Channels.

It was a wildly ambitious app for 2020, given where the models were. At the time, “RAG” wasn’t even a term yet—it wouldn’t be described until later in a research paper—but I was already doing the basic idea: pulling in relevant context from other data, inserting it into the prompt, and using that to generate answers.
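The "pull in relevant context, insert it into the prompt" idea can be sketched in a few lines. This is an illustration under stated assumptions, not how AI Channels did it: the word-overlap scoring, the function names, and the prompt layout are all made up for the example, and a real system would use a better retrieval method.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 1) -> str:
    """Insert the retrieved snippets ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
```

The resulting string is what would be sent to the model, so the answer is generated with the retrieved facts already in view.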

The core concept was simple: different bots for different tasks—play games, search the web, do work, just chat—each living in its own “channel.” In other words: separate conversations for separate purposes, instead of throwing everything into one endless thread. In some ways it looked a lot like what people now experience with ChatGPT and GPTs.

Internally at OpenAI, it was very well received. In fact, it was chosen as a showcase app when GPT-3 launched, because it demonstrated a lot of capabilities in one place. Greg Brockman and others asked me if I planned to release it as a real app.

I didn’t.

In retrospect, given that products built on similar ideas came later and became extremely valuable, you could call that a billion-dollar mistake. Maybe. But you have to understand where my head was at the time.

First, I was a self-taught programmer. I never took a computer science course. I learned from online courses—Udemy, “30 Days of Ruby,” that kind of thing. My entire exposure to software came through videos and messing around on my own.

I didn’t know programmers. I had maybe one friend who coded, and he lived in another part of the country. The first software engineers I ever really met and spoke with in depth were the ones at OpenAI. I was also living in LA, where basically everyone I knew was in entertainment: actors, directors, screenwriters. Not engineers.

So the practical reality was: if I launched something and it started to work, I didn’t know how I’d scale it. I didn’t know how to hire. I didn’t have a network. I could’ve tried to build that network, sure, but it wasn’t the mindset I had at the moment I was making the decision.

But the bigger reasons were speed and cost.

Let me be clear: the intelligence wasn’t the problem. GPT-3 could do a lot if you were willing to be clever. With the right routing, prompt switching, background steps, and “use a smaller decision step before the bigger generation step” strategies, you could squeeze a ton out of it. Functionally, AI Channels was highly capable. It could maintain long-ish conversations using the tricks available at the time, and it could do a version of tool use.

The problem was that it was slow.

Responses could take forever. It wasn’t “a little laggy.” It was the kind of thing that would test your patience in an actual consumer product.

And then there was cost.

Back in 2020, GPT-3 cost about $0.06 per 1,000 tokens. Importantly, that counted both the tokens you sent in and the tokens it generated. Roughly speaking, 1,000 tokens is about 800 words, so you’re talking about a few cents every time you do something substantial.

If you were a business—say a news org summarizing articles—that could be fine. If you’re summarizing an 800-word article and it costs around five or six cents, sure: totally workable for certain workflows.
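The arithmetic behind that estimate is easy to check. The sketch below uses only the two rough figures quoted above ($0.06 per 1,000 tokens, about 800 words per 1,000 tokens); the function names and the 100-word summary length in the usage note are assumptions for illustration.

```python
PRICE_PER_1K_TOKENS = 0.06  # USD, the 2020 figure quoted above
WORDS_PER_1K_TOKENS = 800   # rough ratio quoted above, not an exact rule


def words_to_tokens(words: float) -> float:
    """Estimate a token count from a word count using the rough ratio."""
    return words * 1000 / WORDS_PER_1K_TOKENS


def request_cost(input_words: float, output_words: float) -> float:
    """Estimated USD cost of one request; input and output both count."""
    tokens = words_to_tokens(input_words) + words_to_tokens(output_words)
    return tokens / 1000 * PRICE_PER_1K_TOKENS
```

For example, `request_cost(800, 0)` prices just reading an 800-word article at about six cents, and `request_cost(800, 100)` adds a hypothetical 100-word summary on top of that.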

But for a personal, consumer-oriented product where someone wants to use it constantly, it didn’t feel viable.

People burn through tokens fast. The average person can easily use millions of tokens a week if they’re really engaging. A coder can use hundreds of millions. So I did what anyone building a product should do: I opened spreadsheets and ran scenarios. I tried different pricing models. I tried different free tiers. I tried ad-supported fantasies. I tried everything.

And I kept coming back to the same conclusion: at $0.06 per 1,000 tokens, I didn’t like the business model for what I wanted to build.
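A stripped-down version of that kind of scenario looks something like this. It is a sketch, not my actual spreadsheet: the only number taken from above is the $0.06 per 1,000 tokens price, while the subscription price, the weeks-per-month factor, and the function names are hypothetical.

```python
PRICE_PER_1K_TOKENS = 0.06  # USD, the 2020 figure quoted above


def monthly_api_cost(tokens_per_week: float, weeks_per_month: float = 4.33) -> float:
    """Estimated monthly API spend for one user at a given weekly usage."""
    return tokens_per_week / 1000 * PRICE_PER_1K_TOKENS * weeks_per_month


def monthly_margin(subscription_price: float, tokens_per_week: float) -> float:
    """Subscription revenue minus API spend for one user (negative = loss)."""
    return subscription_price - monthly_api_cost(tokens_per_week)


def break_even_tokens_per_week(
    subscription_price: float, weeks_per_month: float = 4.33
) -> float:
    """Weekly token usage at which API spend equals the subscription price."""
    return subscription_price / PRICE_PER_1K_TOKENS * 1000 / weeks_per_month
```

At this price a user who burns through a million tokens a week costs about $60 a week in API fees alone, so a hypothetical $20 monthly subscription, as in `monthly_margin(20, 1_000_000)`, loses money on every engaged user.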

Not because it couldn’t work at all—there were (and are) plenty of business use cases that absolutely justify that spend—but because I didn’t want to build a consumer app where I either:

- had to charge enough that it wouldn’t feel accessible, or

- couldn’t offer a free tier that felt genuinely useful, or

- would be constantly stressed about usage exploding and blowing up costs.

There was also another angle I didn’t love at the time: I could see a market emerging for “chatbots for lonely people to talk to.” And I’m not here to moralize about it—using AI for conversation and companionship is totally fine, especially now that the models are much more capable and can be genuinely helpful. But back then, I’d watched people using GPT-2-style chatbots for “AI companion” experiences, and it just wasn’t what I was excited to build. It wasn’t the productivity tool direction I wanted to chase.

So I didn’t launch.

Now, there’s obviously a world where I launch anyway, raise money, and bet that token costs would fall dramatically over time (which I did expect to happen). You can make that argument.

But there’s a difference between “this will probably get better later” and “this is something I want to stake years of my life on right now.”

Because the thing people miss about startups is that the money isn’t the only variable—time, stress, and responsibility are the real costs.

On the outside, there’s this story people tell themselves: you have an idea, you walk into a room, someone hands you a huge check, and then you’re set.

Reality is: if you raise money, it comes with weight. It’s not “you got rich.” It’s “you just accepted a burden.” Now you have employees, expectations, timelines, investors to answer to, and a product that has to survive the market. A lot of great founders love that. They’re built for it. But it’s a specific kind of life.

Around the same time I was thinking through all of this, I was asked if I wanted to take a job at OpenAI.

And my priorities were different. Money wasn’t the main thing for me. Learning was. Being around the people building the technology felt like the best possible place to be if I wanted to understand what was coming.

It’s also worth noting: I was in a comfortable position already. I’d been fortunate as an author and as an early-stage tech investor. I wasn’t making the decision from a place of “I need this app to work so I can pay rent.” That changes the equation. It allowed me to choose the path that maximized learning and proximity to the frontier.

So I joined OpenAI.

And after spending four years there in different roles—and now still getting to interact with my colleagues regularly as the host of the OpenAI Podcast—I feel good about that choice. I don’t really regret not launching AI Channels, even knowing what I know now about how big these categories became.

People outside the startup world sometimes have a hard time understanding how you can be happy with a really good outcome when there was also a possible insanely huge outcome on the table.

But “insanely huge outcome” comes bundled with an insanely huge set of tradeoffs. When I look at founders who put everything into what they’re building, I’m reminded that it’s a different risk/reward profile—and it requires a different kind of obsession. Building a startup is not just “building a product.” It’s building a company, and everything that comes with it.

At that stage of my life, I wanted to be part of a team, learn as fast as possible, and be close to the people inventing the future.

AI Channels was fun. It was early. It was a glimpse of what would become obvious later.

But the blockers in 2020 were real: speed and cost, more than intelligence. And given the choice between betting years on a product while waiting for the economics to catch up and joining the place where the economics and the models were going to be shaped, I chose the second path.

Your mileage may vary. But for me, it was the right call.